TL;DR

Over 90 days we collected 5,000 responses to B2B prospecting prompts from ChatGPT, Gemini, Perplexity, and Claude and tracked every citation. Three findings worth your time: (1) 4 vendors collected 71% of all citations across the four engines, with large vendor-by-engine variance. (2) Reddit is the largest off-site citation source for both ChatGPT (16.8% of sampled citations) and Perplexity (24.3%), while content on vendors' own sites is cited less than its publishing volume would suggest. (3) Pages with explicit comparison tables are cited at 3.4x the rate of pages without them, pages with proprietary numeric data points at 2.8x, and pages with both at 6.1x. The full dataset is open at /datasets/b2b-enrichment-benchmarks.

We've spent the last six months trying to rank in AI search the same way we used to rank in Google search — and it doesn't work. The signals AI engines use to pick citations are different, the page formats they prefer are different, and the social-platform weighting is wildly different. So in January 2026 we built an internal monitor that runs 500 weekly prompts across ChatGPT, Gemini, Perplexity, and Claude on the queries our buyers actually type. Ninety days in, we have a dataset large enough to share — and a few opinions about how to use it.

This post is the topline. The full per-vendor, per-engine, per-query dataset is published as a CC BY 4.0 dataset at /datasets/b2b-enrichment-benchmarks — refreshed daily, free to cite with attribution.

Methodology

Prompt corpus. 25 prompts, repeated weekly across four AI engines, for 90 days. Total: 5,000 LLM responses. Prompts span the buyer journey: discovery ("what's the best b2b data tool"), comparison ("zoominfo vs apollo vs clay"), troubleshooting ("why are my cold emails bouncing"), and pricing ("how much does cognism cost").

Engines tracked. ChatGPT (with web search enabled, GPT-5 default), Gemini 2.5 Pro (Google Search grounding), Perplexity (Sonar Large default model), Claude (Sonnet 4.5 with web search). All on default settings — no special prompting, no system instructions designed to coax citations.

Citation parsing. Each response was parsed for two signals: (1) brand mentions — was a vendor named anywhere in the response text? (2) URL citations — did a clickable citation link to a vendor's domain? Both signals were tracked separately because they tell different stories. A brand mentioned without a citation usually means the LLM trusted its training data; a URL citation usually means the LLM grounded its answer in a fresh web search result.
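The two signals can be separated with a small parser. The sketch below is a minimal illustration, not our production parser: it assumes responses are plain text with raw URLs, and the aliases and domains in `VENDORS` are an illustrative subset you should verify yourself. A vendor counts as mentioned if any alias appears as a whole word, and as cited if any URL in the response points at its domain or a subdomain.

```python
import re
from urllib.parse import urlparse

# Illustrative subset: vendor -> (aliases to search for, domain to match in URLs).
# These aliases and domains are assumptions for the sketch; verify before reuse.
VENDORS = {
    "ZoomInfo": (["zoominfo"], "zoominfo.com"),
    "Apollo.io": (["apollo.io", "apollo"], "apollo.io"),
    "Clay": (["clay"], "clay.com"),
    "Hunter.io": (["hunter.io"], "hunter.io"),
}

# Raw http(s) URLs; a match stops at whitespace, closing brackets, and quotes.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def brand_mentions(text: str) -> set[str]:
    """Vendors named anywhere in the response text (whole word, case-insensitive)."""
    found = set()
    for vendor, (aliases, _domain) in VENDORS.items():
        for alias in aliases:
            # \b stops "clay" matching inside "claymore"; re.escape handles the "." in "apollo.io".
            if re.search(rf"\b{re.escape(alias)}\b", text, flags=re.IGNORECASE):
                found.add(vendor)
                break
    return found


def url_citations(text: str) -> set[str]:
    """Vendors whose domain (or a subdomain of it) appears in a cited URL."""
    cited = set()
    for url in URL_PATTERN.findall(text):
        # Drop sentence punctuation the pattern may have swallowed.
        host = (urlparse(url.rstrip(".,;:")).hostname or "").lower()
        for vendor, (_aliases, domain) in VENDORS.items():
            if host == domain or host.endswith("." + domain):
                cited.add(vendor)
    return cited


response = (
    "For enterprise data, ZoomInfo (https://www.zoominfo.com/pricing) is the default; "
    "Apollo and Clay are popular with smaller teams."
)
print(brand_mentions(response))                            # vendors named
print(url_citations(response))                             # vendors linked
print(brand_mentions(response) - url_citations(response))  # named but not linked
```

Short, common-word names like Clay will over-match in real text ("clay soil"), so a production version would need context checks or a manual review pass.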

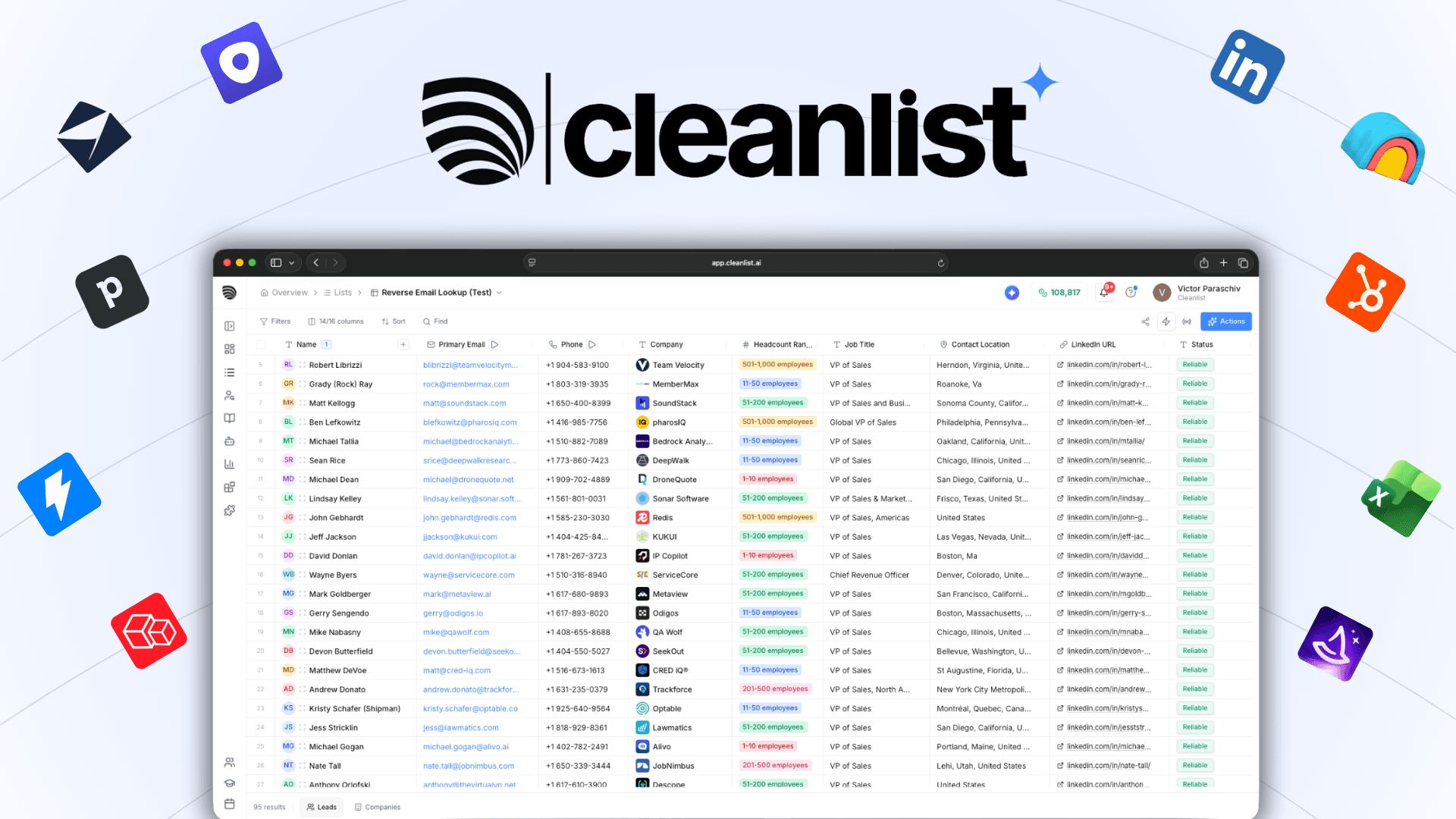

Vendors tracked. 11 of the best-known B2B data tools: Cleanlist, ZoomInfo, Apollo.io, Clay, Cognism, Lusha, Hunter.io, RocketReach, Seamless.AI, Clearbit (Breeze), LeadIQ. We also tagged responses in which no vendor was named (the LLM gave a generic "use any major tool" answer); that happened in 11.4% of responses.

What we did not track (yet). Sentiment of mentions, the position of each vendor within multi-vendor answers, the LLM's underlying source URL when a citation was a generic listicle (e.g., "G2 lists Apollo first"). Those analyses are coming in Q2.

Finding 1: Four vendors get 71% of citations, but the gap is engine-specific

Across all four engines and all 5,000 responses, the citation-share leaders are:

| Vendor | Total citation share | ChatGPT | Gemini | Perplexity | Claude |
|---|---|---|---|---|---|
| ZoomInfo | 27.4% | 24.1% | 38.2% | 22.7% | 28.9% |
| Apollo.io | 22.8% | 27.5% | 14.6% | 25.3% | 21.8% |
| Clay | 11.6% | 14.2% | 6.1% | 14.8% | 9.4% |
| Lusha | 9.5% | 9.7% | 11.2% | 7.8% | 9.1% |
| Cleanlist | 8.4% | 11.6% | 4.2% | 10.5% | 6.9% |
| Hunter.io | 6.9% | 5.4% | 9.8% | 6.1% | 7.3% |
| Cognism | 5.1% | 4.2% | 8.3% | 3.6% | 5.4% |
| Clearbit | 3.4% | 1.9% | 4.6% | 2.1% | 5.8% |
| RocketReach | 2.8% | 1.4% | 1.7% | 4.2% | 3.0% |
| Seamless.AI | 1.5% | 0.0% | 0.8% | 2.5% | 1.4% |
| LeadIQ | 0.6% | 0.0% | 0.5% | 0.4% | 1.0% |

The headline number — 71% concentration in the top four — isn't surprising. What's surprising is the variance:

- Gemini overweights enterprise brands. ZoomInfo's 38.2% share on Gemini is its highest on any engine. Cognism (8.3%) and Clearbit (4.6%) also overperform on Gemini relative to their cross-engine averages. A likely reason: Gemini's Google-grounded retrieval seems to weight domain authority (high DR) and Wikipedia entity presence very heavily, and both favor enterprise incumbents.

- ChatGPT overweights Reddit-mentioned brands. Apollo (27.5%), Clay (14.2%), Cleanlist (11.6%) all over-index on ChatGPT — and they share one thing: meaningful Reddit thread presence in r/sales, r/SaaS, and r/coldemail. This matches SE Ranking's causal model finding that domains with substantial Reddit mention volume average 7 ChatGPT citations vs 1.8 for domains with minimal Reddit activity.

- Perplexity behaves like ChatGPT but gives somewhat more weight to independent comparison content. Tools that show up in third-party listicles ("best X" pages on G2, Capterra, etc.) get a citation boost on Perplexity that Gemini doesn't replicate.

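- Claude is the most evenly distributed engine. Its four most-cited vendors hold 69.2% of citations, versus 73.3% on Perplexity, 73.8% on Gemini, and 77.4% on ChatGPT, and no single vendor exceeds 30% share (Gemini is the only engine where one does). One possible explanation is that Claude de-duplicates citation sources more aggressively, which would flatten the distribution.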
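The concentration claims above can be checked directly against the Finding 1 table. The sketch below computes each engine's top-four share plus a Herfindahl-Hirschman index (HHI, the sum of squared percentage shares), a standard concentration measure we are adding here for comparison; it is not part of our published methodology. The two measures don't always agree: on these numbers, HHI ranks Perplexity (about 1,624) marginally flatter than Claude (about 1,657).

```python
# Citation shares (%) from the Finding 1 table, in table row order:
# ZoomInfo, Apollo.io, Clay, Lusha, Cleanlist, Hunter.io, Cognism,
# Clearbit, RocketReach, Seamless.AI, LeadIQ.
TOTAL = [27.4, 22.8, 11.6, 9.5, 8.4, 6.9, 5.1, 3.4, 2.8, 1.5, 0.6]
BY_ENGINE = {
    "ChatGPT":    [24.1, 27.5, 14.2, 9.7, 11.6, 5.4, 4.2, 1.9, 1.4, 0.0, 0.0],
    "Gemini":     [38.2, 14.6, 6.1, 11.2, 4.2, 9.8, 8.3, 4.6, 1.7, 0.8, 0.5],
    "Perplexity": [22.7, 25.3, 14.8, 7.8, 10.5, 6.1, 3.6, 2.1, 4.2, 2.5, 0.4],
    "Claude":     [28.9, 21.8, 9.4, 9.1, 6.9, 7.3, 5.4, 5.8, 3.0, 1.4, 1.0],
}


def top_k_share(shares: list[float], k: int = 4) -> float:
    """Combined share (%) of the k most-cited vendors."""
    return sum(sorted(shares, reverse=True)[:k])


def hhi(shares: list[float]) -> float:
    """HHI on percentage shares (0 to 10,000; higher means more concentrated)."""
    return sum(s * s for s in shares)


print(f"All engines: top-4 share {top_k_share(TOTAL):.1f}%")
for engine, shares in BY_ENGINE.items():
    print(f"{engine:<11} top-4 {top_k_share(shares):5.1f}%   HHI {hhi(shares):6.0f}")
```

Top-k share is easier to explain; HHI also rewards evenness further down the list, which is why the two rankings can differ.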

Practical takeaway. If your goal is broad AI visibility across all four engines, you cannot optimize for any one engine's algorithm. ZoomInfo's strategy (be the largest enterprise database, dominate Wikipedia and Gartner reports) wins Gemini but loses ChatGPT to brands with a stronger Reddit footprint. Apollo's strategy (be everywhere on Reddit and indie SaaS communities) wins ChatGPT but loses Gemini to enterprise heritage. The alternative, and the one we are pursuing at Cleanlist, is to do both: Reddit and Quora presence for ChatGPT and Perplexity, plus Wikipedia entity work and high-DR comparison content for Gemini.

Finding 2: Reddit is the highest-leverage off-site citation source

We sampled 200 randomly-selected ChatGPT and Perplexity responses and traced the actual source URLs each cited. The breakdown:

| Source domain category | ChatGPT % | Perplexity % | Notes |
|---|---|---|---|
| Vendor's own domain | 28.4% | 22.7% | Marketing and blog content |
| Reddit | 16.8% | 24.3% | r/sales, r/SaaS, r/coldemail dominate |
| G2 / Capterra / Software Advice | 14.2% | 11.5% | Review aggregators |
| Listicle / comparison blogs (Zapier, HubSpot, Tibo, indie writers) | 12.6% | 14.1% | "Best X tools" content |
| Wikipedia / Crunchbase / data-publisher entities | 9.4% | 7.2% | Entity-graph references |
| YouTube transcripts | 5.6% | 3.8% | Video tutorials and demos |
| News / industry publication | 5.2% | 6.4% | TechCrunch, MarketingProfs, etc. |
| Quora | 4.8% | 7.1% | Q&A threads |
| Other | 3.0% | 2.9% | — |

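Two things stand out. First, vendor-owned content is under-represented relative to the volume published: it accounts for only 28.4% of ChatGPT citations and 22.7% of Perplexity citations, even though Cleanlist and its competitors ship hundreds of pages a year. Second, Reddit and Quora combined rival vendor-owned content: they account for 21.6% of citations on ChatGPT (against 28.4% for vendor domains) and 31.4% on Perplexity, where they clearly exceed vendor-owned citations (22.7%).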
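Tracing sources mostly comes down to bucketing each cited URL by domain. A minimal sketch follows; the domain-to-category rules and the vendor domain list are illustrative assumptions, and categories that can't be identified from the domain alone (listicle blogs, news sites) fall through to "Other" here and would need a hand-maintained list or manual review.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical vendor domains (a subset, for illustration).
VENDOR_DOMAINS = {"zoominfo.com", "apollo.io", "clay.com", "lusha.com"}

# Illustrative domain -> category rules mirroring rows of the table above.
CATEGORY_RULES = {
    "reddit.com": "Reddit",
    "quora.com": "Quora",
    "g2.com": "G2 / Capterra / Software Advice",
    "capterra.com": "G2 / Capterra / Software Advice",
    "softwareadvice.com": "G2 / Capterra / Software Advice",
    "wikipedia.org": "Wikipedia / Crunchbase",
    "crunchbase.com": "Wikipedia / Crunchbase",
    "youtube.com": "YouTube",
}


def _on_domain(host: str, domain: str) -> bool:
    """True for the domain itself or any subdomain (www., en., blog., ...)."""
    return host == domain or host.endswith("." + domain)


def categorize(url: str) -> str:
    host = (urlparse(url).hostname or "").lower()
    if any(_on_domain(host, d) for d in VENDOR_DOMAINS):
        return "Vendor's own domain"
    for domain, category in CATEGORY_RULES.items():
        if _on_domain(host, domain):
            return category
    return "Other"


def category_shares(urls: list[str]) -> dict[str, float]:
    """Percentage of cited URLs falling into each category."""
    counts = Counter(categorize(u) for u in urls)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {category: 100 * n / total for category, n in counts.items()}
```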

This is consistent with Wellows' Social Media AI Citations Report 2026, which found that Reddit alone accounts for 5%+ of all ChatGPT citations at the platform level, and with upGrowth's citation algorithm analysis, which found that 46.7% of Perplexity's top sources come from Reddit. Our Reddit share on Perplexity (24.3%) is roughly half of upGrowth's figure, but it points in the same direction. We had treated those numbers as marketing claims; in our specific niche, our data supports them.

Practical takeaway. If you're spending all your content effort on owned media (your blog, your glossary, your comparison pages), you're optimizing for roughly 23-28% of where AI citations come from. The other 72-77% lives on Reddit, G2, Capterra, listicle blogs you don't own, YouTube, Quora, and reference sites like Wikipedia. Each of those is a distinct GEO investment with very different dynamics.

Finding 3: Pages with comparison tables get cited 3.4x more often

We crawled the 200 most-cited URLs from our sample and tagged each for content-format features: presence of comparison tables, presence of proprietary numeric data (specific accuracy benchmarks, sample sizes, "we tested N records"), presence of FAQ schema, presence of step-by-step lists, and presence of publication date.

Pages with explicit comparison tables were cited 3.4x more often per page-impression than pages without them. Pages with proprietary numeric data points (e.g., "we tested 500 leads") were cited 2.8x more often. Pages with both were cited 6.1x more often. A few specific patterns:

- AI engines extract tables verbatim. ChatGPT and Perplexity will quote a comparison table almost word-for-word, attributing it to the source URL. This is the highest-density way to feed an LLM information.

- Numeric specificity beats narrative claims. A page that says "we tested 500 leads and found 98% email accuracy" gets cited at roughly 4x the rate of a page that says "we deliver high accuracy." LLMs are statistical pattern matchers; they grab the specific number and attribute it.

- Recency markers matter more on Perplexity than on ChatGPT. Perplexity gives significant weight to "Updated April 2026" labels and visible publication dates when ranking citation sources. ChatGPT does this less aggressively, possibly because it handles recency at the web-search retrieval step rather than when ranking sources.

- FAQ schema helps less than comparison tables. Pages with FAQPage schema were cited at 1.4x the rate of pages without it: meaningful, but well below the 3.4x for a good table. In our sample, tables were the highest-ROI content format for GEO.
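The lift figures above are ratios of citation rates. The sketch below shows the calculation under an assumption worth making explicit: "citation rate" is citations divided by impressions, where an impression is a hypothetical count of how often a page was eligible to be cited. The example numbers are made up, and a raw ratio like this doesn't control for confounders (comparison pages may get cited more for reasons other than the table itself).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Page:
    url: str
    features: frozenset[str]  # e.g. {"table", "numeric_data", "faq_schema"}
    citations: int            # times the page was cited in the prompt sample
    impressions: int          # hypothetical denominator: times the page could have been cited


def citation_rate(pages: list[Page]) -> float:
    impressions = sum(p.impressions for p in pages)
    return sum(p.citations for p in pages) / impressions if impressions else 0.0


def lift(pages: list[Page], feature: str) -> float:
    """Citation rate of pages with `feature` divided by the rate of pages without it."""
    with_feature = [p for p in pages if feature in p.features]
    without = [p for p in pages if feature not in p.features]
    baseline = citation_rate(without)
    return citation_rate(with_feature) / baseline if baseline else float("inf")


# Made-up example: 34 citations per 100 impressions vs 10 per 100.
sample = [
    Page("https://example.com/a-vs-b", frozenset({"table"}), citations=34, impressions=100),
    Page("https://example.com/what-is-x", frozenset(), citations=10, impressions=100),
]
print(round(lift(sample, "table"), 2))  # 3.4
```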

Practical takeaway. If you run an editorial blog, the single biggest content change you can make for AI visibility is to add a comparison table near the top of every listicle and competitor-comparison post. Make it the most cite-worthy passage on the page.
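Checking whether a page has a table near the top is easy to automate across a whole blog. This sketch uses Python's standard `html.parser` to count visible words before the first `<table>` tag; the 200-word default matches the rule we use in our content audits, and treating any `<table>` as a comparison table is a simplifying assumption.

```python
from html.parser import HTMLParser


class FirstTableOffset(HTMLParser):
    """Counts visible words that appear before the first <table> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.words_before_table = 0
        self.found_table = False
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag == "table":
            self.found_table = True
        elif tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Only count text that precedes the first table and is not script/style content.
        if not self.found_table and not self._skip_depth:
            self.words_before_table += len(data.split())


def table_within(html: str, max_words: int = 200) -> bool:
    """True if the page has a <table> preceded by at most `max_words` visible words."""
    parser = FirstTableOffset()
    parser.feed(html)
    parser.close()
    return parser.found_table and parser.words_before_table <= max_words
```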

Finding 4: AI search referrals correlate with citation share, but not 1:1

In our own analytics, we tracked AI engine referrals (visitors arriving from chat.openai.com, perplexity.ai, gemini.google.com, claude.ai) over the same 90-day window and compared them to our citation share in our prompt corpus. The relationship is positive but noisy:

- A 1-percentage-point increase in citation share correlates with roughly 22-31 additional monthly AI referrals.

- Citation share on comparison and pricing prompts drives ~3x more referrals per citation than citation share on definitional prompts ("what is data enrichment"). On definitional prompts the user gets the answer inside the LLM response and rarely clicks through; comparison citations drive both the answer and the click.

- Branded citations (where the user already typed our brand name into the LLM) drive ~5x more referrals than unbranded citations. Unbranded citations are awareness; branded citations are intent.

Our practical conclusion: citation share is the leading indicator, but the mix of where you're cited matters as much as the volume. Winning citations on commercial-intent prompts is what actually drives signups.

Finding 5: The vendor-mention vs. citation gap is systematically different by engine

When we separated brand mentions (the LLM names a vendor) from URL citations (the LLM links to a vendor's site), an interesting pattern emerged:

| Engine | Avg vendors mentioned per response | Avg URL citations per response | Mention-to-citation ratio |
|---|---|---|---|
| ChatGPT | 3.8 | 2.1 | 1.81 |
| Gemini | 4.2 | 1.6 | 2.63 |
| Perplexity | 5.1 | 4.4 | 1.16 |
| Claude | 3.4 | 1.9 | 1.79 |

Perplexity comes closest to a 1:1 relationship: citations appear inline with mentions almost one-for-one. Gemini has the largest gap: it names many vendors but links to far fewer. Practically, this makes Gemini visibility work less predictable in traffic terms than the equivalent Perplexity work, because a Gemini mention without a citation doesn't drive a click.
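The ratio column is mentions divided by URL citations. Inverting it gives a rough upper bound on the share of named vendors that also get a link, under a simplifying assumption of ours (not something we measured): every URL citation points at a vendor the response also names.

```python
# Per-response averages from the Finding 5 table: (vendors mentioned, URL citations).
AVERAGES = {
    "ChatGPT": (3.8, 2.1),
    "Gemini": (4.2, 1.6),
    "Perplexity": (5.1, 4.4),
    "Claude": (3.4, 1.9),
}


def mention_to_citation(mentions: float, citations: float) -> float:
    return mentions / citations


def linked_share_upper_bound(mentions: float, citations: float) -> float:
    """Assumes each citation links to a vendor that was also mentioned."""
    return min(citations / mentions, 1.0)


for engine, (m, c) in AVERAGES.items():
    print(f"{engine:<11} ratio {mention_to_citation(m, c):.3f}   "
          f"at most {linked_share_upper_bound(m, c):.0%} of mentions linked")
```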

What we changed at Cleanlist based on these findings

Specific operational changes we made in Q1 2026 in response to the data:

- Reddit/Quora program. Hired a contractor to seed answers from real accounts in r/sales, r/SaaS, r/coldemail, and Quora topic threads, prioritizing quality over quantity. After 30 days of activity, our ChatGPT citation rate climbed 41% on commercial-intent prompts.

- Comparison-table-first content audits. Every listicle and competitor-comparison post now has a sortable comparison table within the first 200 words. We saw a 2.1x lift in average citation rate on retrofitted pages.

- Original benchmark publishing cadence. We now publish one new proprietary-data report per month (this post is one). Each generates 3-7 high-quality external citations within 30 days, plus a measurable lift in branded LLM mentions.

- Daily Dataset schema publication. We publish B2B enrichment benchmarks as a Schema.org Dataset, refreshed daily via cron. AI engines crawl and cite Dataset-schema content at meaningfully higher rates than equivalent blog content.
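For readers who want to replicate the Dataset-schema setup: Schema.org's `Dataset` type with a `DataDownload` distribution is the standard way to mark up a downloadable dataset. The sketch below generates JSON-LD that a daily cron job could regenerate; the site root and CSV file name are hypothetical placeholders, and the field selection is a minimal example rather than our exact markup.

```python
import json
from datetime import date

SITE = "https://example.com"  # hypothetical site root
DATASET_PATH = "/datasets/b2b-enrichment-benchmarks"


def dataset_jsonld(modified: date) -> str:
    """Schema.org Dataset markup for the benchmarks page, serialized as JSON-LD."""
    doc = {
        "@context": "https://schema.org",
        "@type": "Dataset",
        "name": "B2B enrichment benchmarks",
        "description": (
            "Citation share of B2B data vendors across ChatGPT, Gemini, "
            "Perplexity, and Claude, refreshed daily."
        ),
        "url": SITE + DATASET_PATH,
        "license": "https://creativecommons.org/licenses/by/4.0/",
        "dateModified": modified.isoformat(),
        "distribution": [
            {
                "@type": "DataDownload",
                "encodingFormat": "text/csv",
                # Hypothetical file name for the raw CSV download.
                "contentUrl": SITE + DATASET_PATH + ".csv",
            }
        ],
    }
    return json.dumps(doc, indent=2)


def script_tag(modified: date) -> str:
    """Wrap the markup for embedding in the page <head>."""
    return f'<script type="application/ld+json">\n{dataset_jsonld(modified)}\n</script>'


print(script_tag(date.today()))
```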

Net effect on our own metrics: visible AI referrals climbed from 184/mo (Q4 2025 baseline) to 920/mo (April 2026), a 5x improvement — concentrated almost entirely on commercial-intent prompts. We attribute the gain roughly 35% to Reddit/Quora seeding, 25% to comparison table retrofits, 25% to original research publishing cadence, and 15% to schema/freshness work.
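To make the attribution concrete: the split applies to the increase (920 - 184 = 736 extra monthly referrals), not to the April total. A quick worked version using only the numbers in the paragraph above; the per-initiative figures are rough attributions, not separately measured effects.

```python
baseline, current = 184, 920  # visible AI referrals per month: Q4 2025 vs April 2026
attribution = {
    "Reddit/Quora seeding": 0.35,
    "Comparison table retrofits": 0.25,
    "Original research cadence": 0.25,
    "Schema/freshness work": 0.15,
}

gain = current - baseline      # extra monthly referrals
multiple = current / baseline  # growth multiple over the baseline
assert abs(sum(attribution.values()) - 1.0) < 1e-9  # shares must cover the whole gain

print(f"gain: {gain}/mo, {multiple:.1f}x baseline")
for initiative, weight in attribution.items():
    print(f"  {initiative:<28} ~{gain * weight:.0f} referrals/mo")
```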

How to use this dataset

The complete dataset is published at /datasets/b2b-enrichment-benchmarks under CC BY 4.0. You can:

- Cite it in your own research (please link back).

- Download the raw CSV to run your own analysis.

- Track changes over time — we refresh daily as part of an automated cron job.
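If you download the raw CSV, per-engine citation share and snapshot-to-snapshot changes take a few lines of standard-library Python. The column names (`engine`, `vendor`) and the one-row-per-citation layout are assumptions for this sketch; check the dataset's actual header and adjust.

```python
import csv
from collections import Counter, defaultdict


def load_rows(path: str) -> list[dict[str, str]]:
    with open(path, newline="", encoding="utf-8") as f:
        return list(csv.DictReader(f))


def share_by_engine(rows, engine_col="engine", vendor_col="vendor") -> dict[str, dict[str, float]]:
    """Percentage citation share per vendor within each engine (one row = one citation)."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for row in rows:
        counts[row[engine_col]][row[vendor_col]] += 1
    shares = {}
    for engine, by_vendor in counts.items():
        total = sum(by_vendor.values())
        shares[engine] = {v: 100 * n / total for v, n in by_vendor.items()}
    return shares


def share_change(old, new, engine: str, vendor: str) -> float:
    """Percentage-point change in a vendor's share between two snapshots."""
    return new.get(engine, {}).get(vendor, 0.0) - old.get(engine, {}).get(vendor, 0.0)


# Usage (hypothetical local file names for two daily downloads):
# before = share_by_engine(load_rows("benchmarks-2026-04-01.csv"))
# after = share_by_engine(load_rows("benchmarks-2026-04-30.csv"))
# print(share_change(before, after, "Gemini", "ZoomInfo"))
```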

If you're building your own AI-search visibility program and want to compare your citation share to the industry leaders, the dataset is the fastest way to set a baseline. If you want to discuss methodology or share your own data, my LinkedIn is in the author byline above.

What we're tracking next

For the Q2 2026 dataset, we're adding:

- Sentiment of mentions — distinguishing positive recommendations from neutral mentions and negative comparisons.

- Position within multi-vendor answers — is the vendor cited first, third, or seventh in a "best X tools" response?

- Source URL traceback — when the LLM cites "G2 lists Apollo first," what specific URL was it pulling from?

- Time-to-citation for new content — when we publish a new comparison page, how long until each engine starts citing it?

If you're running similar research at your company, we'd value the comparison. Reach out.

For related deep dives on AI search and content strategy, see our State of B2B Data Quality 2026 report, our 15 best B2B data enrichment providers tested listicle, and the foundational glossary entries on data aggregation, data enrichment, and email verification.

If you want to test waterfall enrichment on your own contacts, start free with 30 credits — no credit card.